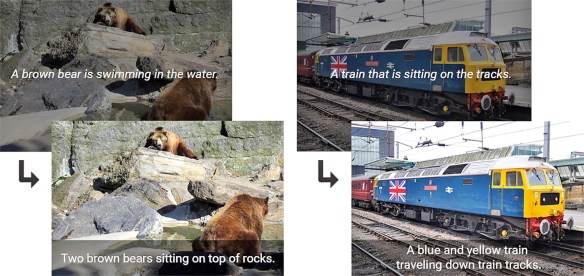

Images archived in digital libraries are either born digital or scans/photos of hard copy originals. AI-based image enhancement may be useful for improving low-quality images of historical photos and documents.

You know how in CSI, the cops always try to “enhance” a shot to zoom in and read (non-existent) details in photos? It’s amusing to the rest of us, but with artificial intelligence, it may one day not be all that impossible.

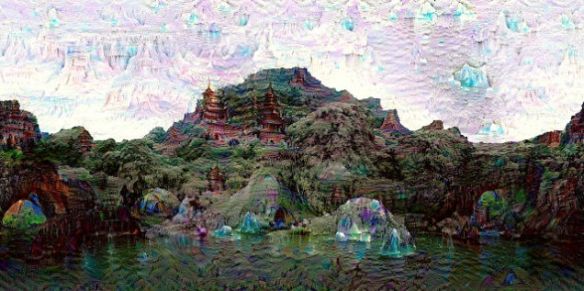

Researchers have been adopting neural networks and machine learning technologies to help computers fill in missing detail in photos.

A few consumer-ready websites are already making some of this magic accessible to you and me.

READ MORE: Website uses neural networks to enlarge small images, and the results are pretty magical | Mashable