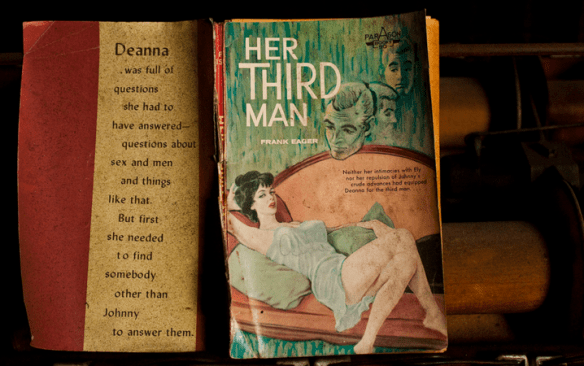

Samim Winiger, whose work we’ve covered recently, sent along his latest experiment. He used an open-source neural network built by Ryan Kiros, a University of Toronto PhD student specializing in machine learning, and trained on 14 million passages from romance novels. Called the Neural-Storyteller, the network was trained to analyze images and retrieve appropriate captions from its vast store of sexy knowledge, creating “little stories about images,” says Kiros.

And what stories! Winiger fed the network a series of images, and it’s hard to even decide where to begin… Not all of the stories (or any of them, really) make perfect sense: what we’re seeing is an artificial neural network struggling to identify objects in a photo and to make links between images and the passages it was trained on.

READ MORE: It Was Inevitable: Someone Taught a Neural Network To Talk With Romance Novels | Gizmodo